Timeline

Sep 2025 – Dec 2025

Industry

EdTech / Higher Education

Role

Lead Product Designer

Team

8 Student Designers, Salesforce Experience Design Team

I co-led the design of a 0→1 AI product that helps students plan their academic future.

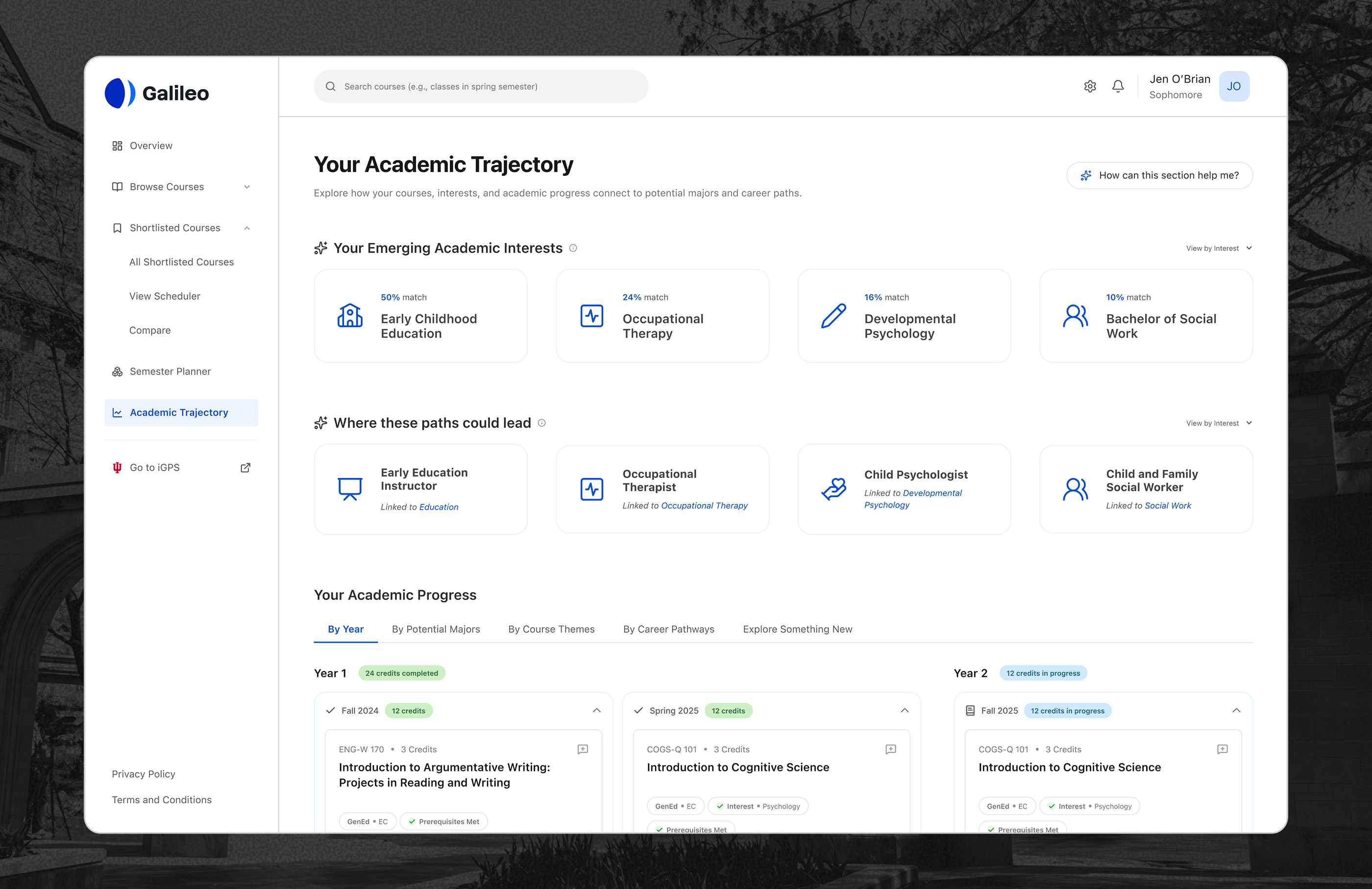

The Overview section of Galileo.

Interaction patterns

The decisions that shaped how Galileo thinks with students

Galileo had a lot of moving parts. Course discovery, shortlisting, scheduling and trajectory planning all had to work as a connected system. The decisions that mattered most were about restraint: what to show, what to hide, when to let AI speak and when to stay out of the way.

Understanding the problem

Students didn't lack information. They lacked a way to make sense of it

We conducted 16 student interviews, a literature review and a digital ethnographic study across Reddit and RateMyProfessor. What kept coming up was not a lack of information. Students had degree audits, course catalogs and enrollment portals. What they lacked was any way to connect that information to their own goals.

High-impact decisions over time

Early course choices shape workload balance, prerequisites and eligibility for majors down the line.

Information without interpretation

University tools surface requirements and data but don't help students understand what any of it means for their specific path.

Fragmented academic systems

Students piece together their academic picture from degree audits, course catalogs, enrollment portals and peer advice, none of which talk to each other.

Decision fatigue and uncertainty

Scattered information forces students to synthesize academic data manually, increasing cognitive load and reducing confidence in their choices.

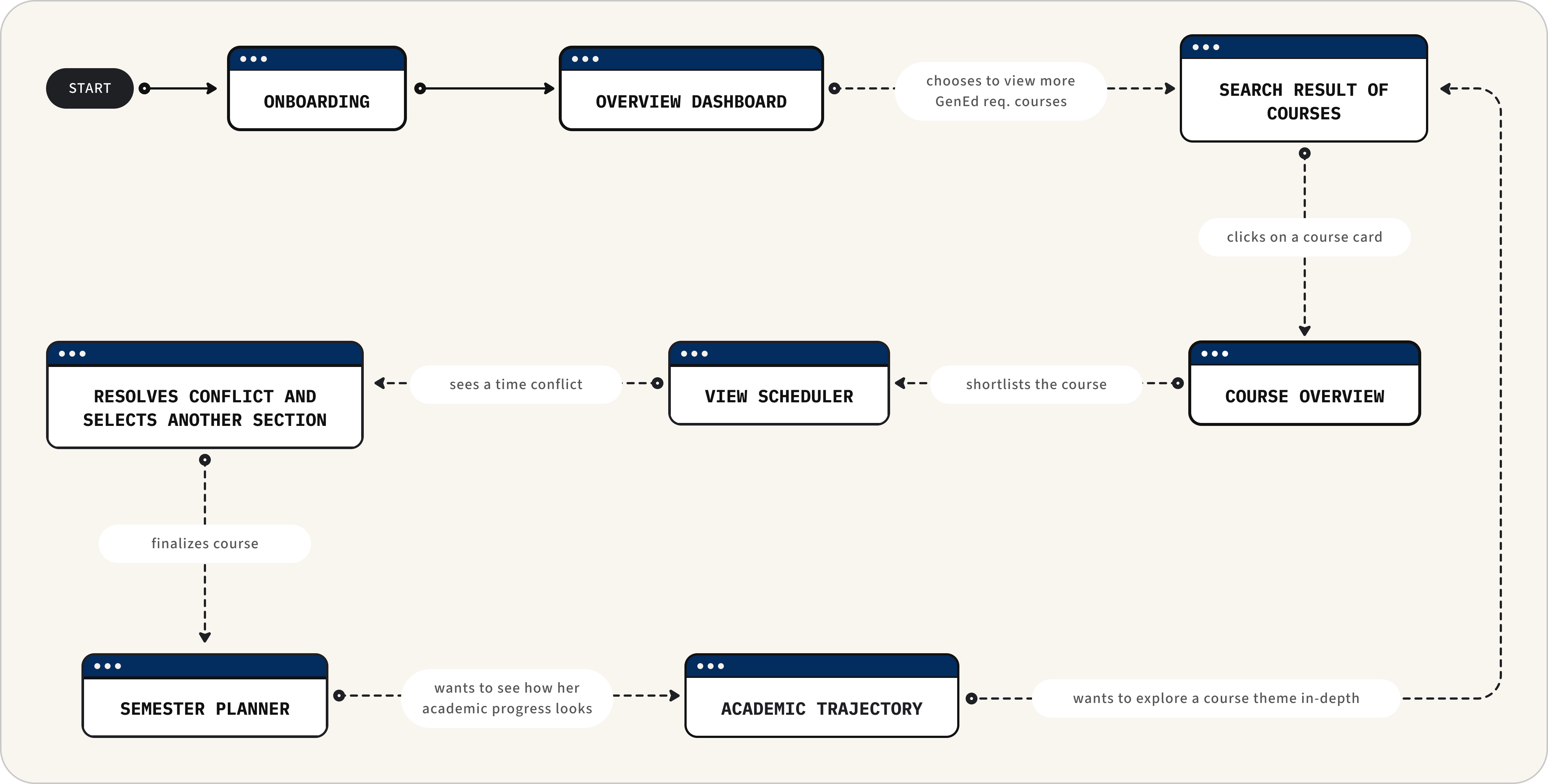

Five sections, one through-line

Five sections, one through-line: the student decides, AI informs

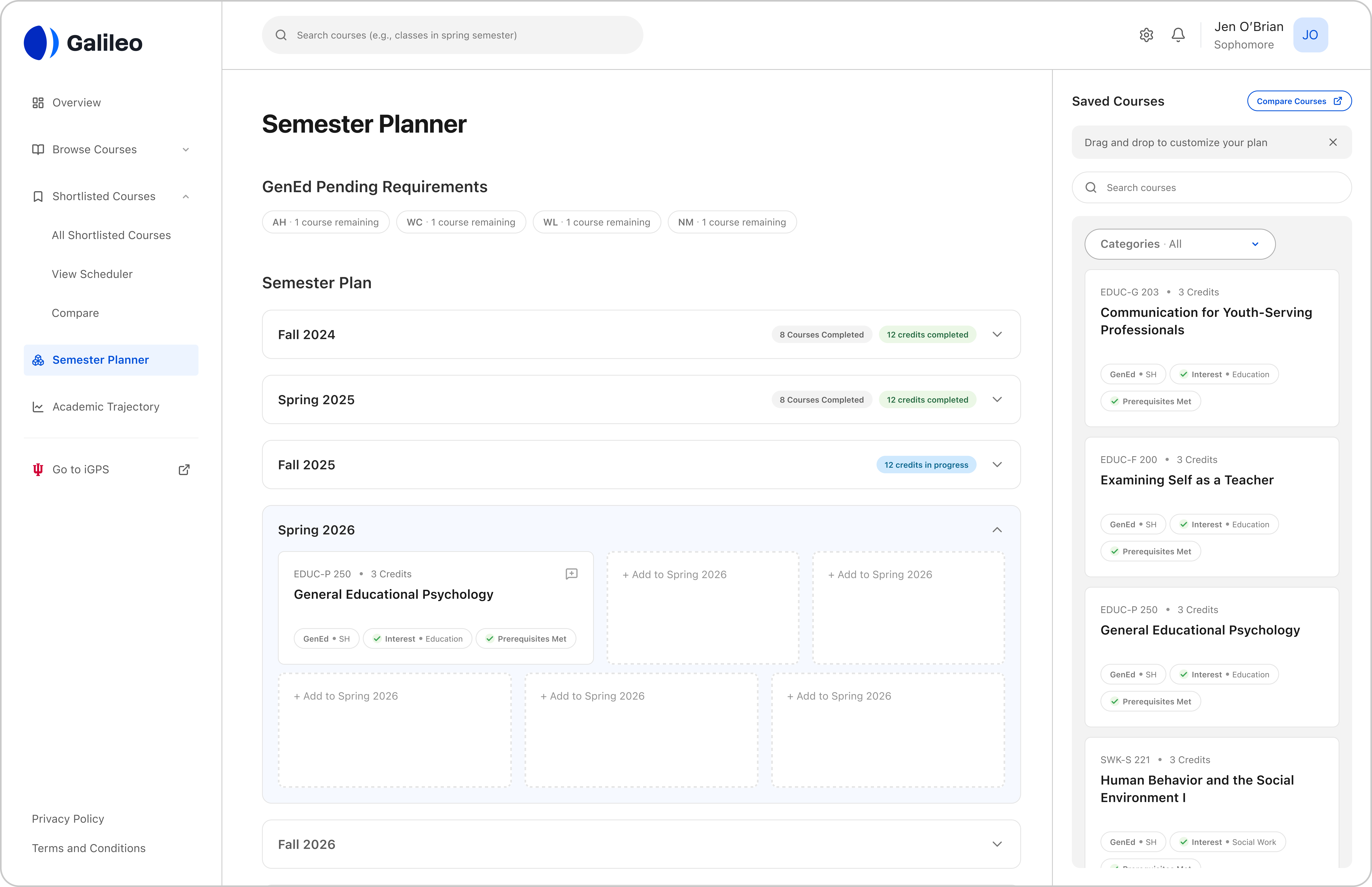

Galileo is built around five connected sections. Students start with an Overview that gives them a quick read on where they stand academically. From there they can browse and discover courses, shortlist ones they're interested in and plan their semester visually. The final section, Academic Trajectory, is where the system steps back and helps students reflect on where their academic choices are pointing them.

Example of a student's user flow while interacting with Galileo.

The sections are designed to work in sequence but don't have to be used that way. The flow follows the student, not the other way around.

Overview Dashboard

Designing a screen that knows when to stop

The Overview is the first thing a student sees when they open Galileo. It had to earn their attention without wasting it.

The Overview section of Galileo.

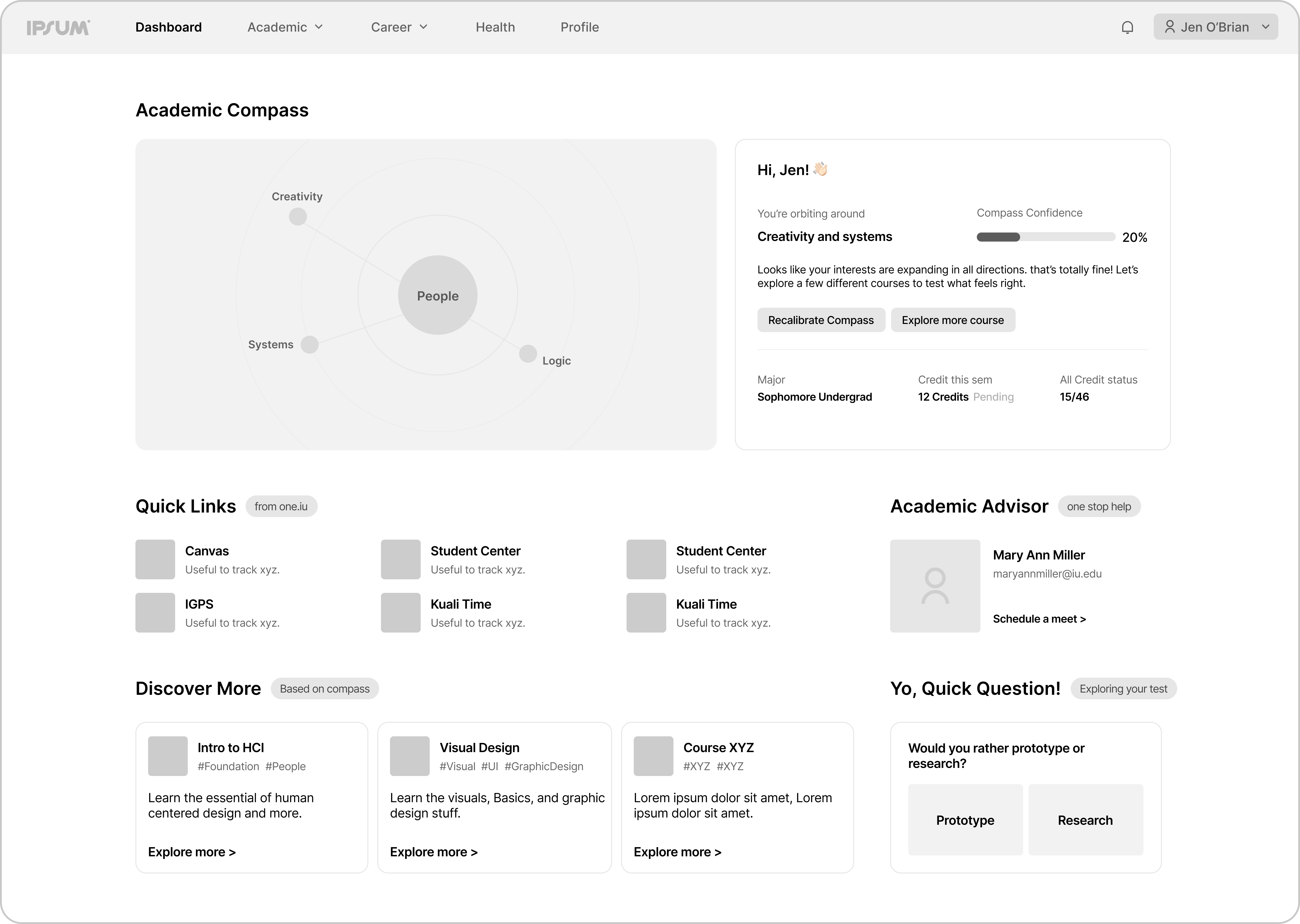

Our early iterations had a lot going on: an academic compass visualizing study trajectory, quick links to university apps, advisor contact, course suggestions, an onboarding quiz snippet and multiple CTAs pulling the student in different directions. It looked thorough, but it wasn't useful.

First iteration of the Overview dashboard, with early elements that were later removed.

The Salesforce Experience Design team made that clear in the first review. There was no set action a student could take. That reframed everything. Our north star became one principle: every section needs to offer something actionable.

For the Overview that meant cutting almost everything. What stayed was GPA, a GenEd requirement tracker and an Academic Progress section. The only additional information is enrollment-related deadlines that are actually time-sensitive.

Academic Trajectory

The section where AI had the most to say

Academic Trajectory is where Galileo steps back and asks a bigger question: not just what courses a student has taken, but what those choices might be pointing toward.

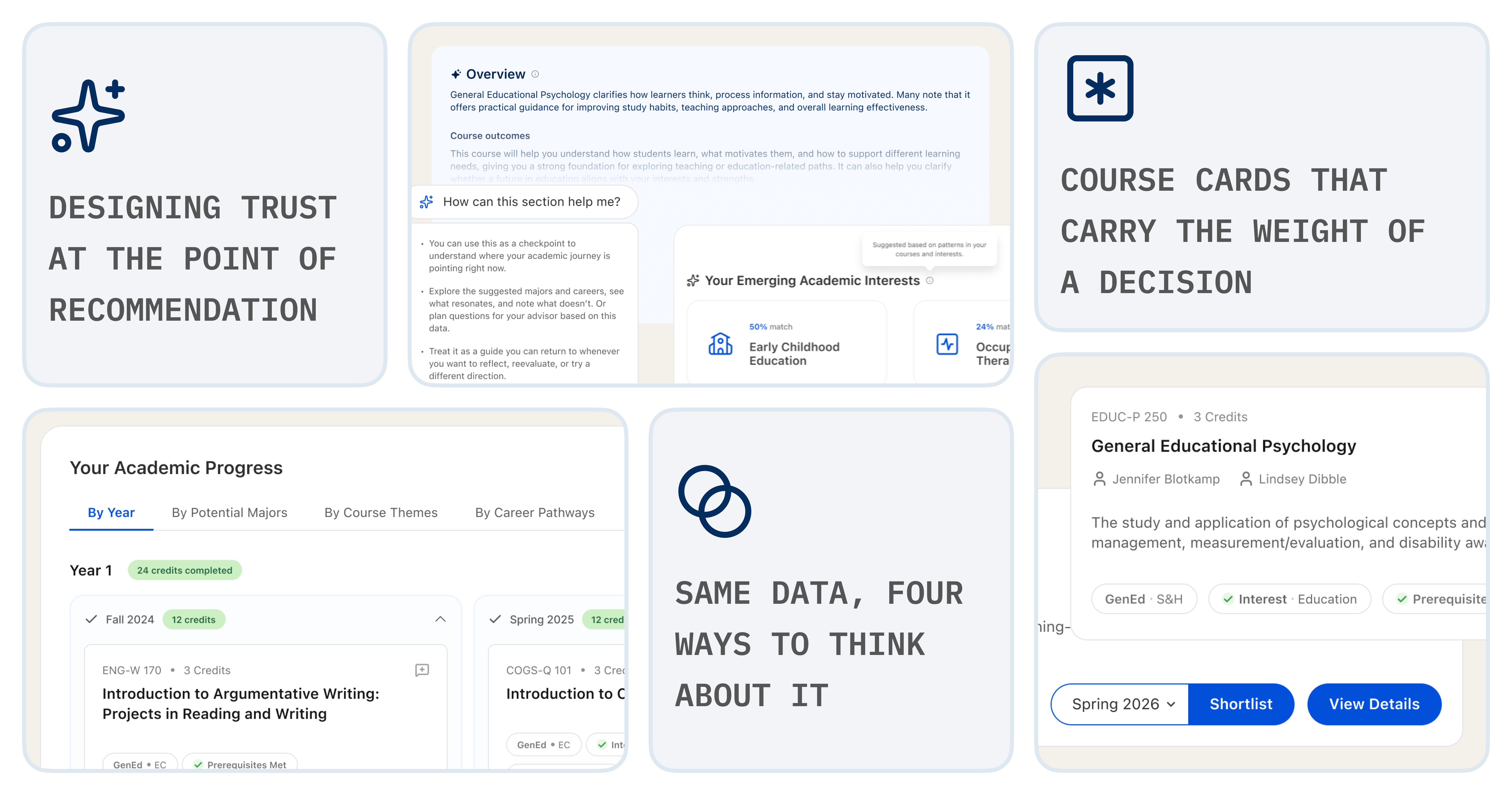

Four ways to read the same data

The Academic Progress section gives students four lenses to view their course history: by year, by potential major, by course theme and by career pathway. The data is identical across all four views. What changes is the interpretive frame.

This came directly from a research insight: students didn't lack information, they lacked ways to connect it to their own goals. A student trying to figure out whether to pursue Education as a major sees something very different when their courses are grouped by potential major than when they are grouped by year. Both views are true. They just answer different questions.

The Academic Trajectory section, showing four ways to view the same course data.

Designing for AI transparency

Because the entire Trajectory section is AI-generated, I had to be deliberate about how that was communicated. Students in our research were clear: they wanted to know when AI was involved, and they did not want to feel like the system was making decisions for them.

The Summarize AI icon appears on every AI-generated element to identify it as such.

Disclosure copy beneath the major and career suggestions explains what data was used and how.

A contextual tooltip on the page header, 'How can this section help me?', reframes the entire section as a thinking tool rather than a verdict.

Summarize icon marking AI-generated content with supporting disclosure copy to inform students how the content was created and how to use it.

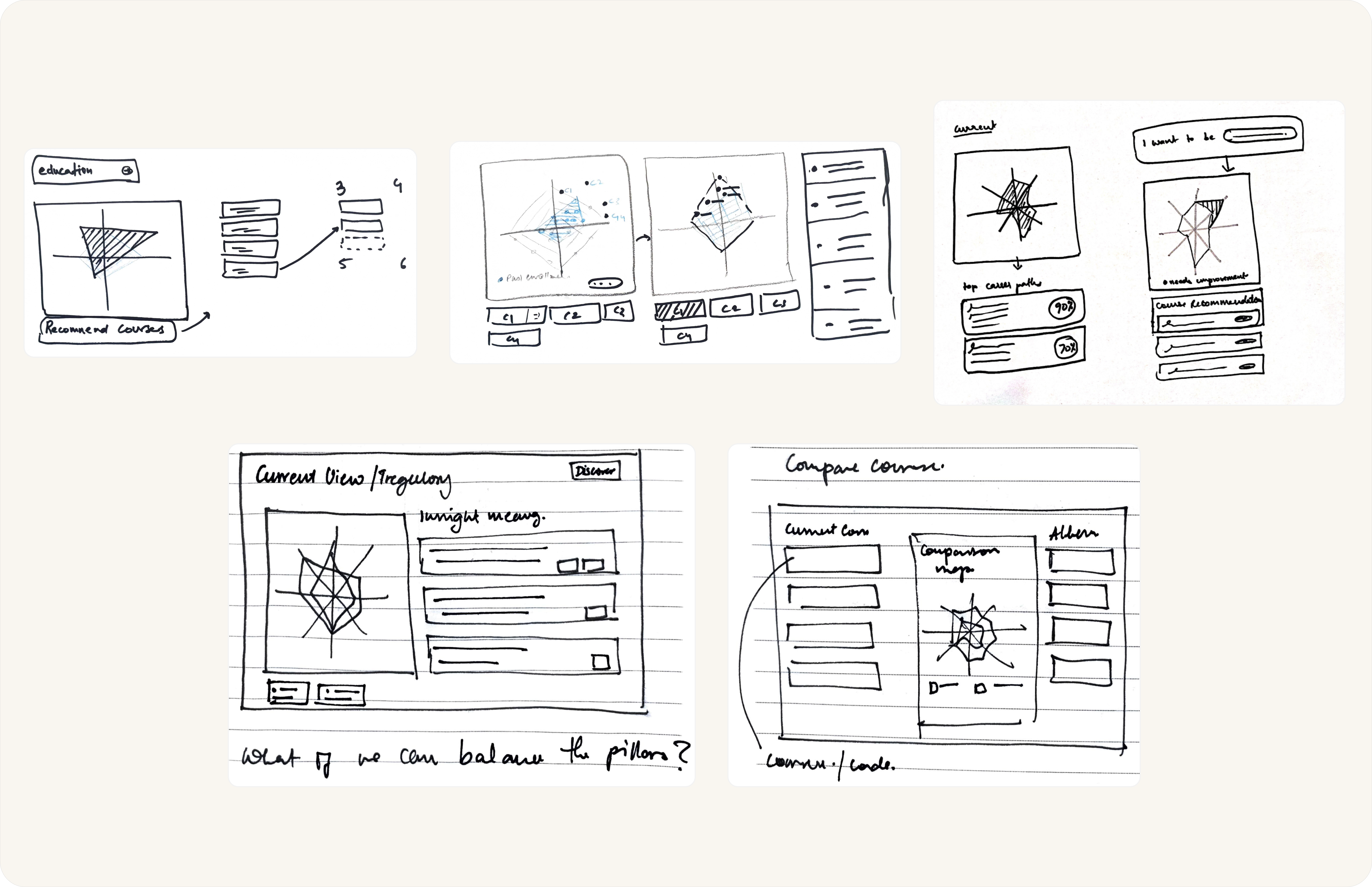

A radar chart. Then a donut chart. Then neither

For a long time I was stuck on how to visually represent the AI's major and career suggestions. The first idea was a radar chart. It felt like the right format for showing multiple dimensions of a student's academic profile. But we couldn't fix the axes. Every student's journey is different, which meant the chart would look different for every student and there was no consistent way to interpret it.

We moved to a donut chart. Simpler, more familiar. But the math wasn't adding up. During evaluations, students couldn't figure out how the percentages were being distributed across the segments. The visualization was once again creating confusion rather than clarity.

Iteration Round 1: Sketching how radar charts could help visualize the student's academic trajectory.

Iteration Round 2: Exploring donut charts for visualizations.

Visual language and cohesion

Making the system feel like one product

As one of two lead designers, part of my role was making sure that component decisions made in one section translated coherently across the whole product.

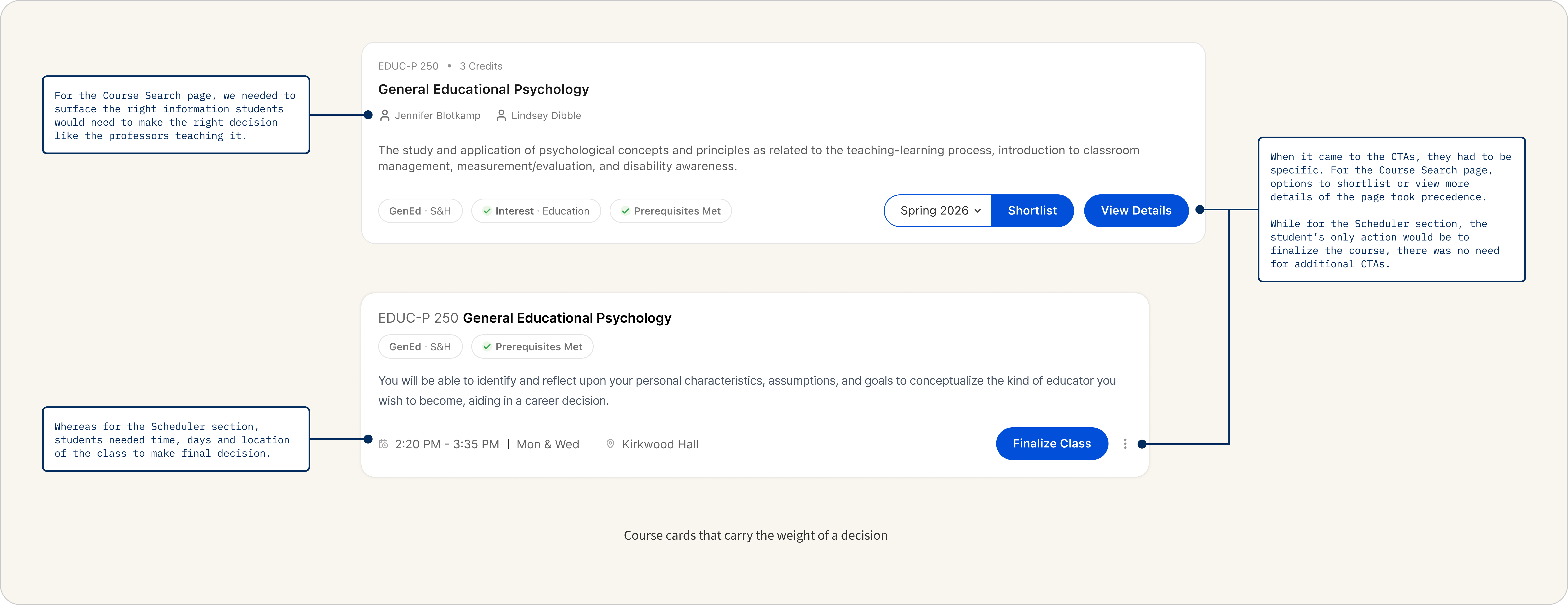

Course cards appear across Browse, Shortlist and Academic Trajectory, and each surface asks something slightly different of them. The component had to be flexible enough to adapt without losing its identity. Getting that right at the component level meant the product could feel like a system rather than a collection of screens.

The CTAs on the card changed depending on what the student needed to do at that point in their journey. On the Course Search page, the priority was exploration, so the card offered two options: shortlist or view more details. On the Scheduler, the student had already made their choice. The only action left was to finalize, so that was the only CTA we gave them.

The rest of the product

The rest of the product, and where I handed off

I contributed to system cohesion across all five sections. The three below were led by other team members.

Students currently discover courses mostly through word of mouth. The Browse section gives that process structure, surfacing courses based on GenEd requirements, interests and peer popularity, with AI connecting student interests to relevant options without making the choice for them.

The course discovery flow of Galileo.

The Shortlisted Course and Scheduler flow of Galileo.

The Semester Planner section of Galileo.

Reflection

What I would do differently

Both formal validation points, a heuristic evaluation and a Salesforce Experience Design review, came late. By the time we were incorporating feedback, most structural decisions were already made. The sharpest insight came from peers at our final presentation: we had thought carefully about how AI should behave in Galileo. We had not thought carefully enough about where a human advisor fits in.

In hindsight, I wish we had used that question to pressure-test our AI decisions earlier: not necessarily to design for it, but to let it challenge our assumptions along the way.

The SF student team with Scott Pitkin, Director of User Experience at Salesforce.

What this project taught me

This was the first time I designed as part of a design team. Keeping visual and interaction language consistent across sections that different people were building in parallel is a different kind of problem: less individual craft, more communication, shared principles and knowing when to push back on a decision that works in isolation but breaks the system.